Order offers show the estimated payout, store location, order type, and distance.

Getting paid on Spark: The Branch Wallet

Credit: LaxxedOtter on Reddit

Spark drivers are paid each Tuesday through the Branch Wallet, an electronic payment service that all drivers have to set up during the enrollment process. Transfers from Branch to a bank account are free if you choose the option that takes 3–5 business days, but there is a 2% fee for instant transfers. There isn’t currently a way to cash out your Spark earnings daily. Most other gig apps offer daily cash-out, so Spark has some catching up to do when it comes to payment flexibility.

Spark drivers receive 100% of any tips left by customers. Customers can include a tip during checkout, and any tip left before the delivery will be included in the estimated payout. Customers can also tip after the delivery is complete. Customers have 24 hours to adjust their tip, so a tip can be increased or decreased after the fact. Unfortunately, that means tip baiting is possible: a customer can lure you with a great tip and remove it afterward. It’s not a common practice, but it’s something Spark drivers should be aware of. You can screen out non-tipping, low-paying orders: a low upfront estimate means that the customer didn’t tip during checkout, and customers are less likely to tip after the order is over.

You are using a very large and complicated word embeddings model, bert_multi_cased. I suggest following the checkpoint strategy: first, transform the dataset and save it to disk. This step runs in a distributed manner; then you can load the transformed dataset back and go ahead with the training. I am interested to see how much memory is used in the end when the embeddings are saved and loaded this way (as in the example).

I trained all the multilingual NerDL models that come from the WikiNER datasets, which have between 80K and 90K training examples each, and I never needed more than 128 GB of memory. For those models I used glove_840B_300; these embeddings seemed to be very accurate for the majority of the multilingual NER models. I recommend also trying this WordEmbeddings model instead of BertEmbeddings to see the difference.
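The checkpoint strategy can be sketched in Scala as follows. This is a minimal sketch, not the exact code from this thread: it assumes Spark NLP is on the classpath, that the pretrained `bert_multi_cased` model can be downloaded, and that the training data is a CoNLL file read with `CoNLL().readDataset`. The object name, file paths, and epoch count are hypothetical placeholders. The BERT transform is run once across the executors, written to Parquet, and read back, so the NerDL fit on the driver starts from precomputed embeddings.

```scala
import com.johnsnowlabs.nlp.annotators.ner.dl.{NerDLApproach, NerDLModel}
import com.johnsnowlabs.nlp.embeddings.BertEmbeddings
import com.johnsnowlabs.nlp.training.CoNLL
import org.apache.spark.sql.SparkSession

object CheckpointedNerTraining {
  // Hypothetical locations: replace with your own training file and scratch space.
  val trainPath = "/data/wikiner_xx.conll"
  val checkpointPath = "/tmp/ner_train_with_bert.parquet"

  def train(spark: SparkSession): NerDLModel = {
    // CoNLL().readDataset yields document, sentence, token, pos and label columns.
    val training = CoNLL().readDataset(spark, trainPath)

    val bertEmbeddings = BertEmbeddings
      .pretrained("bert_multi_cased", "xx")
      .setInputCols("sentence", "token")
      .setOutputCol("embeddings")

    // Step 1: run the expensive embedding transform once (distributed over the
    // executors) and materialize the result on disk.
    bertEmbeddings
      .transform(training)
      .write
      .mode("overwrite")
      .parquet(checkpointPath)

    // Step 2: load the precomputed embeddings back from disk.
    val readyData = spark.read.parquet(checkpointPath)

    // Step 3: fit NerDL on the saved data; this part runs on the driver.
    new NerDLApproach()
      .setInputCols("sentence", "token", "embeddings")
      .setLabelColumn("label")
      .setOutputCol("ner")
      .setMaxEpochs(10) // hypothetical value
      .fit(readyData)
  }

  def main(args: Array[String]): Unit = {
    // The master URL and driver memory are expected to come from spark-submit.
    val spark = SparkSession.builder().appName("ner-checkpoint-sketch").getOrCreate()
    val model = train(spark)
    model.write.overwrite().save("/tmp/ner_dl_bert_multi_cased") // hypothetical output path
    spark.stop()
  }
}
```

Parquet is only one choice of on-disk format here; the idea is that the embeddings are materialized once instead of being recomputed as part of the training input.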

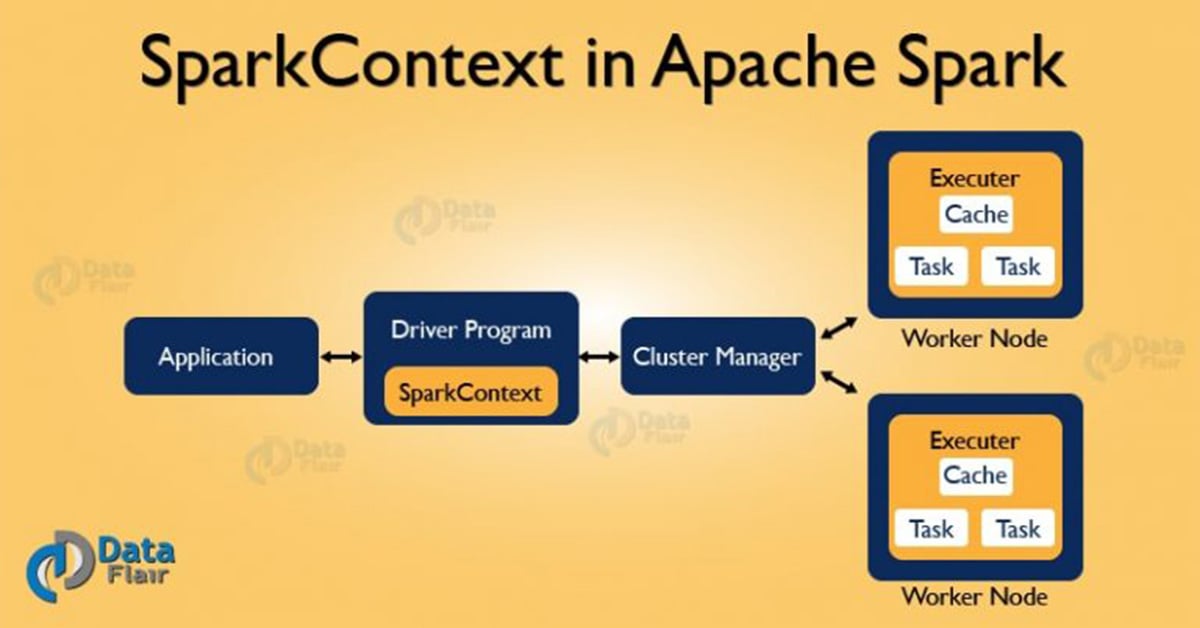

Training always happens on a single machine, which in Apache Spark means the driver. Distributed training is not possible because of the complexity of the algorithm and Spark's limitations. The embeddings stage in question is set up like this:

```scala
import com.johnsnowlabs.nlp.embeddings.BertEmbeddings

val bertEmbeddings = BertEmbeddings
  .pretrained(name = "bert_multi_cased", lang = "xx")
  .setInputCols("document", "token")
  .setOutputCol("embeddings")
```
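To try the suggested WordEmbeddings alternative, the BertEmbeddings stage can be swapped for the pretrained `glove_840B_300` model. The sketch below assumes Spark NLP's `WordEmbeddingsModel.pretrained(name, lang)` and that the model is available for language `"xx"`; the object and method names are hypothetical. It keeps the same input and output columns, so the stage can replace the BERT one without renaming anything downstream, although the embedding size changes (300 dimensions instead of 768).

```scala
import com.johnsnowlabs.nlp.embeddings.WordEmbeddingsModel

object GloveEmbeddingsStage {
  val modelName = "glove_840B_300"
  val lang = "xx"

  // Same input/output columns as the BertEmbeddings stage it replaces.
  def build(): WordEmbeddingsModel =
    WordEmbeddingsModel
      .pretrained(modelName, lang)
      .setInputCols("document", "token")
      .setOutputCol("embeddings")
}
```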
